I promised last time to write about the other psychometrician I encountered last week. His name is Peter Merenda, and he’s something of a psychometrician’s psychometrician. He’s written a textbook about testing, along with another book on statistical analysis and about 250 articles in various journals. He’s won prizes, fellowships, awards. He founded the URI Psychology department in 1960, and led it too, retiring in 1984. Now, at the age of 90, he still keeps up with the literature — and the NECAP tests still rankle him.

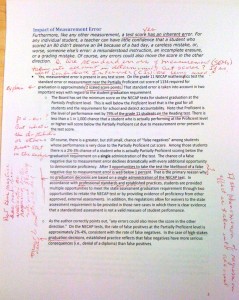

He was kind enough to sit down for an interview last weekend and to mark up some of my columns and the reply from the RIDE consultant, Charles DePascale. His years as a professor seem to have inculcated a deep love of red pen, as you can see from his markup:

So that’s a lot of red. What does he think of my critique of using the NECAP test as a graduation requirement? My suggestion was that the test is created with the expectation that lots of students will flunk, for perfectly valid statistical goals. I am deeply chagrined to say that he chided me… for not going nearly far enough. He said my critique was correct as far as it goes, but there is a far worse problem: validation. Those markups on the paper above say things like, “RIDE as user of NECAP is in violation of National Testing Standards”, and “the test scores have not even been validated for any purpose.”

Validating a test means asking, in a serious and disciplined way, what the test actually measures. It usually means stepping outside the framework of the test itself to see how good the correlation is between test results and whatever it is you want to be measuring. For an employment test, you might try to compare job performance with test results (before making your hiring dependent on those test results, that is). For an intelligence test, you might compare test results with some other intelligence test. And for a graduation test, you might want to examine the test-takers and see, in some independent way, whether the students who pass deserve to graduate and whether the students who flunk do not.
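For the curious, here is roughly what the simplest version of that comparison looks like in practice. This is only a sketch: the function name `pearson_r` is my own, the numbers are invented for illustration and are not NECAP data, and a real validity study would involve far more students and far more care about how the outside measure is collected. It computes the correlation between a set of test scores and an independently recorded measure, here imagined as course grades.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations from each mean (unnormalized covariance).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a sample has no variance")
    return cov / sqrt(var_x * var_y)


# Hypothetical, made-up numbers for illustration only; NOT real NECAP data.
test_scores = [28, 35, 41, 44, 52, 58, 63, 70]
# The outside criterion, assumed to be recorded independently of the test.
course_grades = [61, 70, 66, 78, 74, 85, 80, 92]

validity_coefficient = pearson_r(test_scores, course_grades)
print(f"correlation between test and criterion: r = {validity_coefficient:.2f}")
```

A value near 1 would mean the test tracks the outside measure closely, and a value near 0 would mean it tells you almost nothing about it. The point is only that the second column has to come from somewhere other than the test itself.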

For an employment test, Merenda was able to cite a list of court cases that essentially make it illegal to use a written employment test that hasn’t been validated in a rigorous way. (He was an expert witness in several of those cases.) The idea is that it’s illegal to use a test as a bar to employment if that test has nothing to do with the job in question. The result of these cases is that the burden is on employers and testing companies to show that any test is relevant to the job in question, and to show it in a way that can withstand legal scrutiny. So they do, and there is a long list of American Psychological Association Standards that dictate exactly how.

The NECAP technical documentation does indeed contain a “Validation” chapter, indicating that at least some of the test designers understood this to be an obligation. But the obligation is honored in the breach, and the chapter is essentially laughable. There is, for example, a collection of graphs that show results of the NECAP against answers to a few of the survey questions that are asked at each test. For example, you can see on page 84 a graph comparing performance on the 11th grade math NECAP with the survey question, “How often do you do homework?” At the bottom of the same page is a graph comparing the writing score with the survey question, “How often do you write more than one draft of an essay?” While these are occasionally interesting, the word “superficial” comes to mind far more readily than the word “rigorous”.

To the test designers’ credit, there are two graphs that compare students’ performance on the 11th grade NECAPs with their grades. How did they get those grades? They asked students on the same survey for their most recent grades in reading and math. Self-reported data: of course it’s reliable. This whole chapter is sort of a feint in the direction of a validity study, but as actual data in support of the test, it barely rises to the level of risible, let alone to a level that might be defensible in a court.

How about a study of how well students do in geometry class compared with NECAP performance? Maybe you could even track how different the scores would be if the students took geometry before or after the NECAP? Maybe a correlation between NECAP scores and being required to do remedial work in college? A correlation between scores and likelihood of dropping out? Or a longitudinal study of job success compared with NECAP scores? Or all of the above? There are lots of ways you could think of to answer the question of how well the NECAP does at evaluating a student’s readiness to leave high school, but this work was apparently never done, or if it was, it’s not reported in the chapter entitled “Validity” where the curious can find it.

One hundred years ago, Henry Goddard, who went to school at Moses Brown and was a member of the first generation of psychological testers, persuaded Congress to let him set up an IQ testing program at Ellis Island that eventually proved that most immigrants were “morons.” (He coined the term.) During World War I, intelligence tests used to select officers were later shown to have profound biases in favor of native-born recruits and those of northern European extraction, which is another way to say that lots of Italian-American soldiers were unjustly denied promotions. For decades, well into the 1960s and 1970s, misused IQ tests classified tremendous numbers of healthy children as disabled or mentally deficient. The history of testing in America is littered with misuses of testing that have had profound and unjust effects on millions of adults and children. Does the available evidence about the NECAP test persuade you that we are not in the middle of one more chapter of this terrible history?

Decisions made about testing can have huge impacts on young people who deserve far better than we’re giving them. To quote a prominent Rhode Island education official in a slightly different context, it is an outrageous act of irresponsibility to impose a test as a graduation requirement without doing the homework necessary to support its use. Again, our children deserve much better than this, and it’s hard to understand why we can’t seem to give it to them.

It is doubtless just a sign of my own weakness and vanity that I do not simply stop this column here. However, over the past couple of weeks, my own qualifications to comment on the NECAP test have been part of the public conversation, such as it is, so I can’t really help myself. Here’s one more bit of Merenda’s grading:

If you can’t read it, it says, “Sgouros may not be a professional psychometrician, but he does resemble one in his writings!” Because I already know I’m a nerd, I’m choosing to take this as a compliment.

Along with Merenda, I have also heard from psychometricians and their ilk in three other states over the past couple of weeks. And Bruce Marlowe, an education psychology professor at Roger Williams, weighed in on the editorial page of yesterday’s Providence Journal. So far, the score is that they’re all with me, except for the ones who suggest that I haven’t gone far enough. Against them is one guy, who is paid by RIDE, and who apparently has to misconstrue what I said in order to argue against it, in an unsigned document. And an education commissioner. I report, you decide, as they say.
