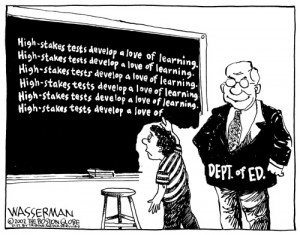

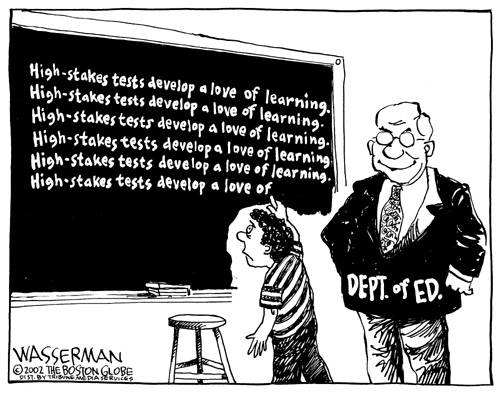

Tom Sgouros has raised compelling reasons against using the NECAP as a graduation requirement, including the distorting effect of the NECAP on curriculum. The most obvious impact—accelerated by school budgets under intense fiscal pressure—is the elimination of subjects not on the test: music, arts, and career tech are among the endangered species.

There is another important point that hasn't received as much attention: the "dumbing down" effect of the NECAP. People often say that the curriculum is turning into "test prep" and that test prep is boring and meaningless.

Is test prep (instruction keyed to the NECAP) really boring and meaningless? One way to answer this question is to look at the NECAP technical report (pages 6 and 7 of the current edition), which specifies both the content and the level of intellectual difficulty on the test. There, intellectual difficulty is described in terms of levels of "Depth of Knowledge," a scheme developed by Norman Webb. The technical report supplies the following descriptors of Levels 1 and 2 for reading:

- Level 1: This level requires students to receive or recite facts or to use simple skills or abilities…Items require only a shallow understanding of text presented…

- Level 2: This level includes the engagement of some mental processing beyond recalling or reproducing a response…Some important concepts are covered but not in a complex way…

Neither of these levels requires what is commonly described as "thinking," that is, understanding what is in a text and then doing something with it: analyzing it, connecting it to another text, placing it in context, or any number of other valuable intellectual activities. Instead, items at these levels require students to parrot back what is in a passage. And ultimately, parroting is boring and meaningless.

How much of the grade 11 NECAP tests the ability to parrot? On page 7, the report tells us that 23% of the grade 11 reading items are at Level 1 and 69% are at Level 2. Together that is 92%: over 90% of the test sits at a very low level of cognitive complexity. The situation in math is similar. When teachers use released NECAP items as their cue for what to teach, it is no wonder that the entire intellectual level of teaching and learning is dumbed down. So the rumors are true, and there is real evidence explaining why the NECAP is a force dumbing down teaching and learning.

But the fact that the NECAP is at a low level of intellectual sophistication seems to clash with the fact that many students "fail" the test: nearly 40% statewide in math. If you think of parroting as singing back a song you heard, though, it's obvious that some songs are easier to sing back than others. In reading, just make the grammar more complicated and the vocabulary more unfamiliar, and the song gets harder to parrot. Test makers can also boost difficulty by offering several very similar songs as possible right answers. In other words, a relatively simple intellectual task can be made artificially more difficult by the wiles of test construction. Let me know if you detect anything morally suspect in this.

The second reason Tom gives (RIFuture.org, March 23) for not using the NECAP has to do with the purpose it serves. Tom points out that the NECAP was designed to measure as wide a spectrum of achievement in schools as possible. There is a lot of diversity in achievement within a school, so the test needs to include items that are very hard, items that are very easy, and everything in between to measure that diversity. This is very different from a test designed to determine whether or not a student has mastered a body of knowledge, such as the material taught in a course. For that kind of decision, a test requires items that measure the required body of knowledge at the required level of difficulty. Instead of a full spectrum of item difficulty, the items would be tightly clustered at the passing level.

Which of these two tests seems more appropriate for determining whether a student has mastered the minimum required to graduate? Clearly the second kind. If your primary interest is whether a student has achieved the minimum competencies required for graduation, you would cluster your items closely around that cut point so you could make the determination as accurately as possible.

We know (see http://www.transparency.ri.gov/contracts/bids/3296220_7058821.pdf) that RIDE intends to extend its testing contract with Measured Progress, at a cost of over one million dollars, to write a test that will be used in 2015 to determine whether seniors graduate. We should ask whether this is a smart use of money.

The answer is basically "No." The contract extension calls for Measured Progress to produce another edition of the NECAP. As a general standardized test, the NECAP spreads its items across all four performance levels, including proficient (Level 3) and proficient with distinction (Level 4).

But this test will be used to make only one judgment: whether or not a student is at Level 1, substantially below proficient. The only items that need to be on this test are those that measure whether a performance is at Level 1 or at Level 2; this, after all, is what determines whether a student graduates.

From the NECAP blueprint, we know that only 28 of the 52 items on the reading test and 30 of the 64 items on the math test measure Level 1 and Level 2 performance. In other words, a test roughly half the length of the NECAP could do the job just as well.

In fact, a different test could do a better job by adding a few more Level 1 and Level 2 items in each of the content areas it measures, increasing the reliability of the cut score. However, this kind of strategic thinking does not seem to be happening at RIDE; the contract was simply rolled over once again, at great cost.