Recent remarks in the Journal by the Commissioner of Education point a finger away from the NECAP and toward math education in this state: “Gist said that math is the problem, not the NECAP. ‘This is not about testing,’ she said. ‘It’s about math. It’s about reading.’” (Jan. 31, 2014).

A statement like this puts everyone on notice. It tells our students they had better try harder; it tells our teachers they need to stay on track and get better results; and it tells our schools they need to raise their test scores. The subtext of the statement is that there is a big crisis and just about everyone in the school system is to blame.

And just behind this subtext lies a further, ominous, and obvious one: everyone in the schools needs to be held accountable until we get out of this mess.

But what kind of a mess are we in? What if our low math scores are the result of how we measure math instead of how we teach math? If that is the case, the crisis is much smaller, and the argument for holding everyone to high-stakes accountability (students don’t graduate, teachers get fired, schools get taken over) has much less traction.

Since a lot rides on the answer to this question (is it the way we teach math, or the way we measure math?), it is worth trying to answer it.

One way to go about this is to compare the performance standards set by different tests. A performance standard is sometimes expressed as a grade level, as in, “the proficiency level of the grade 11 NECAP is set at a ninth-grade level.” In this case, a student demonstrating proficiency would show us that he or she has mastered the expectations of a student completing ninth grade. That is, the student would answer most questions of ninth-grade content and difficulty correctly, but would answer far fewer of the questions set at higher levels of difficulty or covering topics not usually taught until tenth grade or later.

This would show up in the average score of the students taking the test: if the same group of students took both, a test set at a ninth-grade proficiency level would produce a higher average score than a test set at an eleventh-grade proficiency level. That makes sense. The eleventh-grade standard for proficiency is harder than the ninth-grade standard because it assumes students have covered more content and developed stronger skills.

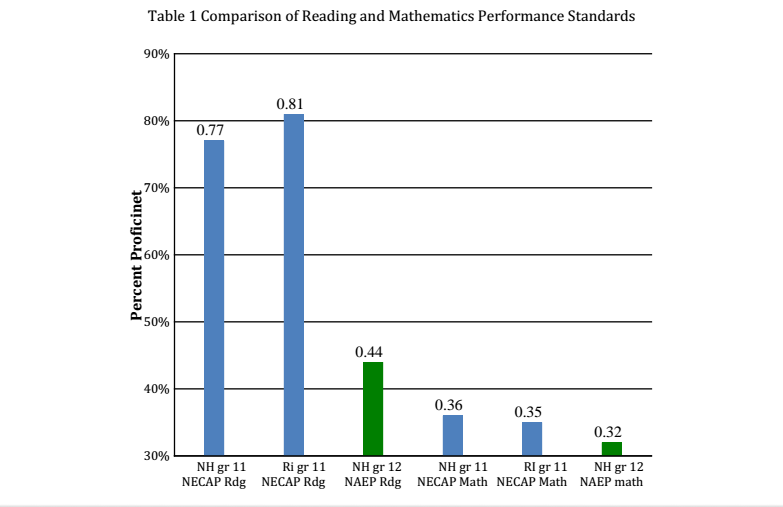

Back to the basic question: is it the way we teach math or the way we measure math? If we look at the way the NECAP measures reading, we can see that in the two states that take the test in grade 11, New Hampshire and Rhode Island, about 80% of students achieve proficiency. If we say that 80% achieving proficiency indicates the test is at an eleventh-grade level, then we have to wonder about the NAEP results in these states, because fewer than half of their students achieve proficiency on NAEP.

We then have to ask ourselves: what performance standard is NECAP using? Whatever it is, it is much lower than the performance standard NAEP uses, because a much higher percentage of students pass. In fact, over 80% more students pass NECAP than pass NAEP (roughly 1.8 times as many), so you can think of the NECAP performance standard as almost twice as easy as the NAEP performance standard. The tests are using different performance standards.

If you look at math, the results are startlingly different: here the percentages passing NECAP and NAEP are very comparable, meaning both tests use roughly the same performance standard. And if you look at the NAEP reading and math performance standards, they are pretty comparable, with reading a little higher than math.

It looks like NAEP, the national measuring stick, uses about the same performance standard for reading and math while the NECAP does not.

Now, you can argue that NECAP has set the math performance standard right and has used a reading standard that is too easy. Then, of course, we would have a reading and a math problem instead of just a math problem.

But admitting either that the math standard is too hard or that the reading standard is too easy would mean admitting that something is wrong with the way NECAP standards have been set, something the Department of Education and the Commissioner have steadfastly denied.

I think that, at heart, they have denied such an obvious fact because it is too costly to their policy agenda to admit that anything could be wrong with the tests.

To do so would be to cast doubt on the expertise of the test designers, who are the ultimate source of authority in the accountability debate. If test designers are wrong and tests are fallible, then how we measure students, teachers and schools is up for grabs. RIDE loses its top-down leverage.

In the same article, Gist said, “Now is not the time to rethink our strategy.”

“Holding students accountable is really important,” she said. “We cannot reduce expectations.” The Chairman of the Board of Education, Eva Mancuso, echoed the thought: “We are on the right course.” This sounds like a comment from the bridge of the Titanic.
